🧠 AI Understands The World

Intelligence gets real

Google just made voice AI sound… human. Meta is predicting brain activity from text, audio, and video. And LeCun? His team just showed that a tiny 15M-parameter model can simulate the physical world faster than models 50x larger.

What's on FIRE 🔥

IN PARTNERSHIP WITH HUBSPOT

Want to get the most out of ChatGPT?

ChatGPT is a superpower if you know how to use it correctly.

Discover how HubSpot's guide to AI can elevate both your productivity and creativity to get more things done.

Learn to automate tasks, enhance decision-making, and foster innovation with the power of AI.

AI INSIGHTS

Voice AI used to feel robotic. Slow replies. Lost context. Awkward timing.

Google just upgraded the experience with Gemini 3.1 Flash Live → faster responses, better tone understanding, and stronger multi-step reasoning.

Benchmarks show clear progress:

ComplexFuncBench Audio → 90.8% score

Scale AI Audio MultiChallenge → 36.1% with reasoning enabled

The model handles interruptions, pauses, and emotional cues better. It can detect frustration or confusion and adjust responses in real time.

Companies like Verizon, LiveKit, and The Home Depot report more natural conversations in production workflows.

Rolling out across:

• Gemini Live

• Search Live (200+ countries)

• Gemini Enterprise CX

• Google AI Studio API

All generated audio is watermarked with SynthID, so AI-generated speech can be identified.

Why it matters: Voice is becoming the main interface for AI agents. As latency drops and conversations feel natural, voice moves from demo → real infrastructure.

PRESENTED BY WISPR FLOW

You think 4x faster than you type. Why slow down?

Wispr Flow turns your voice into ready-to-send text inside any app. Speak naturally and Flow handles the cleanup: it strips filler words, fixes grammar, and formats everything properly.

For developers, this means:

Dictate into Cursor, VS Code, or any IDE with full syntax accuracy

Give coding agents 10x more context by talking instead of typing

Write PRs, docs, and Linear tickets without switching to a text editor

Respond to Slack and email without breaking your flow state

Used by teams at OpenAI, Vercel, and Clay. 89% of messages sent with zero edits. Millions of users worldwide.

Available on Mac, Windows, iPhone, and now Android, where it is free and unlimited during launch.

AI SOURCES FROM AI FIRE

1. Build private AI agents that actually work. A step-by-step OpenClaw workflow to connect Telegram, Discord, and local LLMs without fragile setups.

2. Stop copying AI startup ideas. Use YC’s non-consensus framework to find weird opportunities that turn into billion-dollar companies.

3. Forget basic chatbots: deploy a 24/7 AI employee instead. Learn Base44 Superagent prompts to automate Gmail, Slack, and daily workflows.

TODAY IN AI

AI HIGHLIGHTS

🍱 OpenAI just introduced Codex Plugins that connect coding workflows with Slack, Figma, Notion, Gmail, and more. Reusable skills and MCP (Model Context Protocol) integrations turn Codex into a true automation hub. See how plugins work here.

🧠 Meta open-sourced TRIBE v2, a model that predicts human brain activity from text, audio, and video with zero-shot generalization. It simulates neural responses in seconds instead of months of lab work. Explore the research here.

⚡ Anthropic quietly shipped Claude Code Web, letting developers run remote coding tasks in the cloud, auto-fix PR issues, and monitor jobs from mobile. Parallel debugging just became real. Try it here.

💻 Ollama now integrates directly with VS Code, allowing local and cloud models to run inside GitHub Copilot Chat. You can switch between local Qwen models and cloud models without leaving your editor. Setup guide here.

🎙️ Mistral released Voxtral TTS, an open-weight multilingual voice model with emotional speech control and low latency for voice agents. It rivals ElevenLabs while staying customizable. Listen to samples here.

💰 AI Daily Investment: Meta Platforms agreed to fund energy infrastructure for its $27B Louisiana data center, including seven natural gas plants, 240 miles of transmission lines, and battery storage. The project, supported by Entergy Louisiana, could scale to 5 gigawatts, making Mark Zuckerberg’s facility one of the largest AI data centers in the world.

HOT PAPERS OF THE WEEK

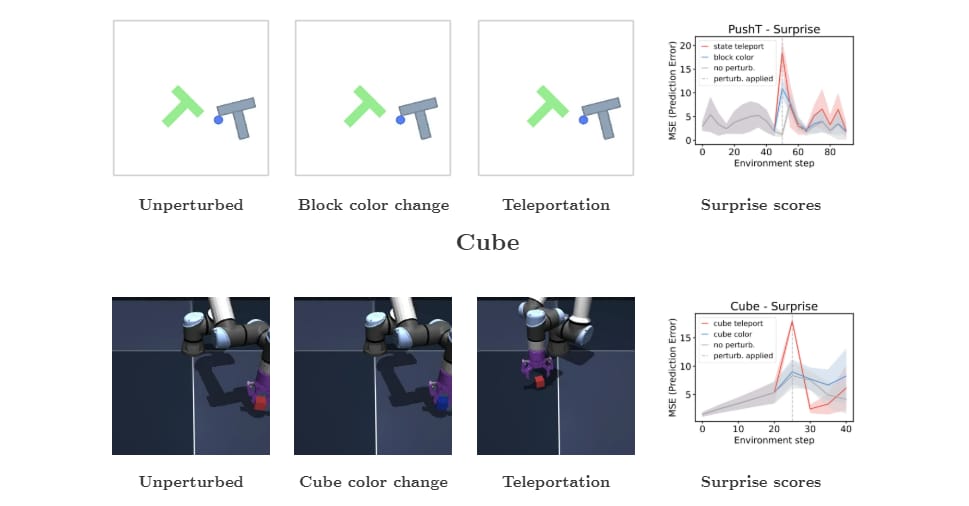

1/ LeWorldModel: Stable JEPA World Model from Pixels

Yann LeCun’s team trains a JEPA (Joint-Embedding Predictive Architecture) world model end-to-end on a single GPU using just two loss terms. The model achieves fast planning and learns physical structure directly from pixels.

2/ Omni-WorldBench: Benchmark for Interactive World Models

Alibaba Group researchers introduce a 4D benchmark testing how models simulate actions across space and time, revealing major gaps in current world models.

3/ OpenResearcher: Open Pipeline for Deep Research Agents

Teams from the University of Waterloo and Texas A&M University release a fully open pipeline that generates 97k long-horizon research trajectories, improving deep-search agent accuracy by 34 points.

NEW EMPOWERED AI TOOLS

🖱️ Agentation turns UI annotations into structured context, helping AI coding agents understand what to fix.

🔧 Claude Code auto-fix automatically resolves PR errors in the cloud, keeping pull requests ready to merge.

📈 Cockpit AI deploys revenue agents that research leads, personalize outreach & book meetings.

🧩 Codex Plugins package skills and integrations into reusable plugins for scalable AI workflows.

AI BREAKTHROUGH

The "World Model" dream just got a serious reality check in the best way possible. Yann LeCun’s team just dropped LeWorldModel, and it’s basically a masterclass in "less is more."

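Usually, these models can take the easy way out by mapping every input to the same representation (a failure known as representation collapse), so they lean on a pile of extra training tricks to prevent it. LeWorldModel just... stopped using them. Instead, it uses a simple two-term loss function to keep everything stable: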

Term 1: Predicts the future state accurately.

Term 2: Keeps representations distinct so the model actually learns something useful.

Most world models waste a ton of brainpower trying to predict every single pixel in a frame. LeWorldModel instead predicts in latent space, focusing only on the essential features and ignoring the visual noise.
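To make the two-term idea concrete, here is a minimal Python sketch of a loss with that shape, computed on latent vectors rather than pixels. It is not LeWorldModel's code: the function names are hypothetical, embeddings are plain lists of floats, and Term 2 is a VICReg-style variance hinge swapped in as a stand-in for whatever regularizer the paper actually uses.

```python
import math
import random

# Minimal sketch of a two-term world-model loss on latent vectors.
# Assumption: Term 2 is a VICReg-style variance hinge used as a stand-in,
# NOT LeWorldModel's actual regularizer. All names are hypothetical.

def mse(a, b):
    """Term 1 building block: squared error between two latent vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)

def variance_penalty(batch, target_std=1.0, eps=1e-4):
    """Term 2 (stand-in): penalize embedding dimensions whose std across the
    batch falls below target_std. A fully collapsed batch scores ~target_std."""
    n, dim = len(batch), len(batch[0])
    total = 0.0
    for d in range(dim):
        column = [z[d] for z in batch]
        mean = sum(column) / n
        var = sum((c - mean) ** 2 for c in column) / (n - 1)
        total += max(0.0, target_std - math.sqrt(var + eps))
    return total / dim

def two_term_loss(pred_next, target_next, embeddings, lam=1.0):
    """Term 1: predict the next latent state. Term 2: keep embeddings spread out.
    Targets are embeddings of the next frame, never raw pixels."""
    prediction = sum(mse(p, t) for p, t in zip(pred_next, target_next)) / len(pred_next)
    return prediction + lam * variance_penalty(embeddings)

if __name__ == "__main__":
    # A collapsed encoder (every frame -> same vector) "predicts" perfectly,
    # so Term 1 is 0, but Term 2 exposes the collapse.
    collapsed = [[0.0, 0.0, 0.0] for _ in range(8)]
    print(two_term_loss(collapsed, collapsed, collapsed))  # roughly 0.99

    # Spread-out embeddings leave Term 2 at (or near) zero.
    random.seed(0)
    spread = [[random.gauss(0.0, 2.0) for _ in range(3)] for _ in range(64)]
    print(variance_penalty(spread))
```

The collapsed batch gets a perfect prediction term, which is exactly why Term 1 alone isn't enough: Term 2 is what makes "output the same vector for everything" expensive.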

It solves complex planning tasks using only raw images as input.
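The planner itself isn't described here, so the following is a hedged sketch of one common recipe for latent world models (used, for example, by PlaNet): search over action sequences with the cross-entropy method (CEM), rolling each candidate through the model in latent space and scoring it against a goal embedding. The `predict` function is a hypothetical toy stand-in for a trained predictor.

```python
import random

# Minimal sketch of planning with a latent world model via the cross-entropy
# method (CEM). `predict` is a hypothetical toy predictor, not LeWorldModel's.

def predict(z, action):
    """Hypothetical latent dynamics: the state moves by the action.
    A real world model would be a trained neural network."""
    return [zi + ai for zi, ai in zip(z, action)]

def rollout_cost(z0, actions, goal):
    """Roll an action sequence through the predictor entirely in latent space
    and score the squared distance between the final latent and the goal."""
    z = z0
    for a in actions:
        z = predict(z, a)
    return sum((zi - gi) ** 2 for zi, gi in zip(z, goal))

def cem_plan(z0, goal, horizon=5, samples=200, elites=20, iters=10, seed=0):
    """Return the mean action sequence after `iters` rounds of CEM."""
    rng = random.Random(seed)
    dim = len(z0)
    mean = [[0.0] * dim for _ in range(horizon)]
    std = [[1.0] * dim for _ in range(horizon)]
    for _ in range(iters):
        # Sample candidate action sequences from the current Gaussian.
        candidates = [
            [[rng.gauss(mean[t][d], std[t][d]) for d in range(dim)] for t in range(horizon)]
            for _ in range(samples)
        ]
        # Keep the lowest-cost sequences (the "elites").
        candidates.sort(key=lambda seq: rollout_cost(z0, seq, goal))
        best = candidates[:elites]
        # Refit a per-step, per-dimension Gaussian to the elites.
        for t in range(horizon):
            for d in range(dim):
                vals = [seq[t][d] for seq in best]
                m = sum(vals) / elites
                mean[t][d] = m
                std[t][d] = max(1e-3, (sum((v - m) ** 2 for v in vals) / elites) ** 0.5)
    return mean

if __name__ == "__main__":
    start, goal = [0.0, 0.0], [3.0, -2.0]
    plan = cem_plan(start, goal)
    print(rollout_cost(start, plan, goal))  # small: the actions sum to roughly (3, -2)
```

In a model-predictive control loop you would execute only the first action of the plan, observe and encode the next frame, then replan. Every candidate costs `horizon` calls to the predictor, so a small, fast model directly translates into faster planning.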

At 15M parameters, it’s a lightweight fighter compared to the usual giants.

For anyone dreaming of truly autonomous agents, this is the blueprint. It’s fast, it’s lean, and the code is already out there for us to play with. If we can get this much "physical intelligence" out of 15M parameters, the game for on-device AI just completely changed.
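To put 15M parameters in perspective for on-device use, here is the back-of-the-envelope weight-memory arithmetic. It assumes exactly 15 × 10^6 parameters, counts weights only (no activations or runtime overhead), and uses 1 MB = 10^6 bytes; the last line applies the same arithmetic to a model 50x larger.

```latex
\begin{aligned}
\text{fp32: }      & 15 \times 10^{6} \times 4\,\text{B} = 6.0 \times 10^{7}\,\text{B} \approx 60\,\text{MB} \\
\text{fp16/bf16: } & 15 \times 10^{6} \times 2\,\text{B} = 3.0 \times 10^{7}\,\text{B} \approx 30\,\text{MB} \\
\text{int8: }      & 15 \times 10^{6} \times 1\,\text{B} = 1.5 \times 10^{7}\,\text{B} \approx 15\,\text{MB} \\
50\times\text{ larger, fp32: } & 750 \times 10^{6} \times 4\,\text{B} = 3.0 \times 10^{9}\,\text{B} \approx 3\,\text{GB}
\end{aligned}
```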

We read your emails, comments, and poll replies daily


Hit reply and say Hello – we'd love to hear from you!

Like what you're reading? Forward it to friends, and they can sign up here.

Cheers,

The AI Fire Team
