🤷♀️ Is AGI Further Away Than We Thought?

Grab the OpenClaw Vault before it's gone

Is AI actually getting smarter, or is it just getting better at memorizing the internet? The results are a total wake-up call. Note: grab the free OpenClaw Mastery Vault below!

What's on FIRE 🔥

IN PARTNERSHIP WITH SALESFLOW

Run multichannel outreach across LinkedIn and email, without pricing penalties.

Your cost per seat drops from $99 → $24.99 as your team scales.

Built for teams that don’t plan to stay small.

AI INSIGHTS

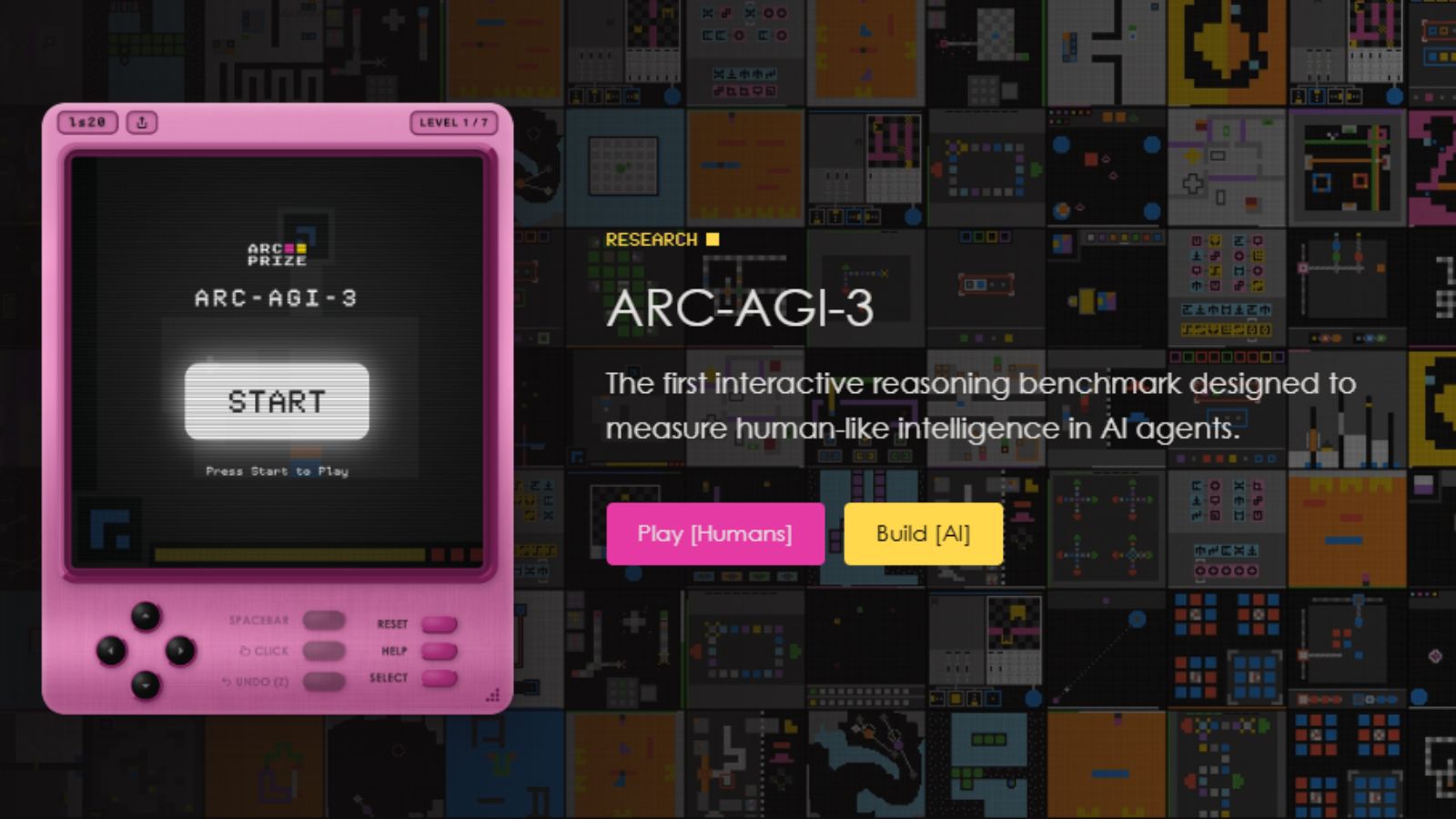

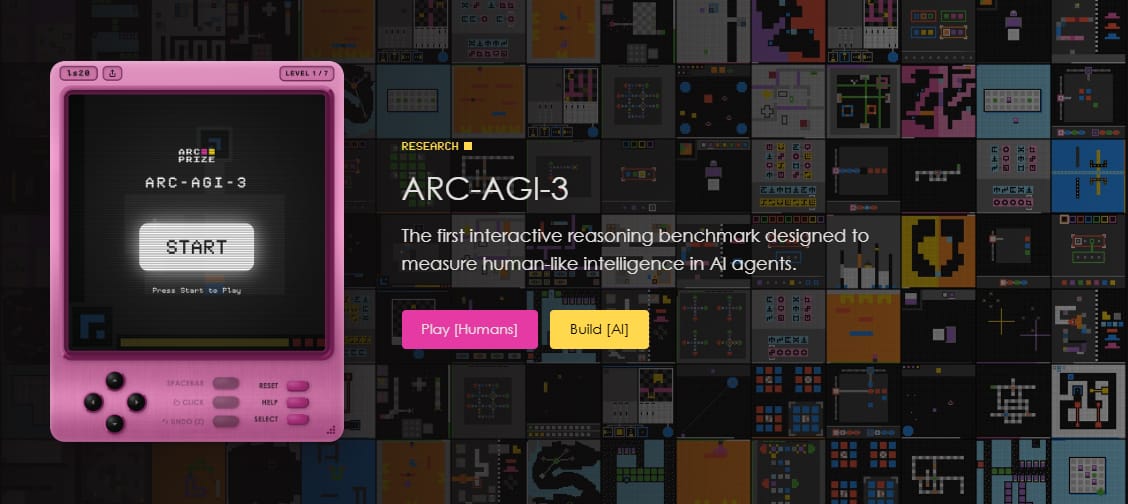

The ARC Prize Foundation just released ARC-AGI-3, a new benchmark designed to test whether models can adapt to completely new situations without prior training.

It focuses on adaptability, the core ability required for Artificial General Intelligence (AGI). The test includes 135 new environments & roughly 1,000 puzzle levels. Frontier models scored below 1% across the board:

Gemini 3.1 Pro: 0.37%

GPT-5.4: 0.26%

Claude Opus 4.6: 0.25%

Grok 4.2: 0%

The gap between human and machine adaptability is still massive. Humans solved every environment on their first attempt. AI models did not come close. You can play the public games yourself to judge whether they are really that hard.

But not everyone agrees the test is perfectly fair. Some critics argue the scoring system is designed to produce very low numbers, since a model must match or exceed human problem-solving speed to score well.

If a future model eventually passes ARC-AGI-3, it likely will not just be a larger version of today’s systems. It may represent a different type of intelligence entirely.

🎁 Today's Trivia - Vote, Learn & Win!

Get a 3-month membership at AI Fire Academy (500+ AI Workflows, AI Tutorials, AI Case Studies) just by answering the poll.

Which statement best reflects the ARC-AGI-3 takeaway?

PRESENTED BY HUBSPOT

Want to get the most out of ChatGPT?

ChatGPT is a superpower if you know how to use it correctly.

Discover how HubSpot's guide to AI can elevate both your productivity and creativity to get more things done.

Learn to automate tasks, enhance decision-making, and foster innovation with the power of AI.

AI SOURCES FROM AI FIRE

1. How to ACTUALLY Find Unique AI Startup Ideas in 2026 (Not From Podcasts or Random Posts). Building is easy in 2026. Original thinking is rare. Let’s see how YC partners identify non-consensus ideas that later become billion-dollar companies.

2. Base44 Mastery Guide: A Safer AI Agent Runs a Full Business Alone (OpenClaw Alternative). Learn how to build a smart AI worker that runs your business 24/7. Step-by-step tutorial to automate tasks, workflows, and daily operations.

FREE OPENCLAW MASTERY VAULT

We’ve officially launched OpenClaw Workshop on Product Hunt today! To celebrate, we’re doing something a little "insane."

We’ve taken every single prompt, n8n configuration, and OpenClaw workflow we used in this masterclass and bundled them into a single Mastery Vault.

Usually, this is reserved for our Pro members, but for the next 24 hours, we’re giving it away to anyone who helps us climb the charts.

1. Click this link to visit our Product Hunt launch page.

2. Give us an upvote (it takes exactly 10 seconds).

3. Grab the Vault link immediately.

This exclusive file contains everything we used in our record-breaking session. It is organized so you can quickly replicate the setup: just copy, paste, and deploy.


TODAY IN AI

AI HIGHLIGHTS

🎓 If AI explanations feel either too basic or too technical, this Stanford course sits right in the middle. It balances clarity and depth. Save this course (videos included).

📂 Google launched switching tools to import chat history & memories from ChatGPT, Claude, and others into Gemini. Here’s how to do it in just a few minutes.

⚔️ Altman said he tried to “save” Anthropic during the Pentagon contract clash while accusing CEO Dario Amodei of undermining OpenAI for years, according to leaked Slack messages.

🍎 Apple may open Siri to rival AI assistants in iOS 27 to turn the iPhone into a multi-model AI hub. Gemini, Claude, and others could plug in via a new Extensions system.

🛡️ Reddit is testing bot labels, passkeys, and optional World ID scans to separate humans from automation. AI posts stay allowed, but the measures are meant to slow the surge of fake activity.

🏛️ Trump just appointed Mark Zuckerberg (Meta), Larry Ellison (Oracle) & Jensen Huang (Nvidia) to a new tech advisory panel focused on shaping future AI regulation.

💰 Big AI Fundraising: Nvidia-backed Reflection AI is raising $2.5B, targeting a $25B valuation to compete with Chinese AI. JPMorgan may join the round.

NEW EMPOWERED AI TOOLS

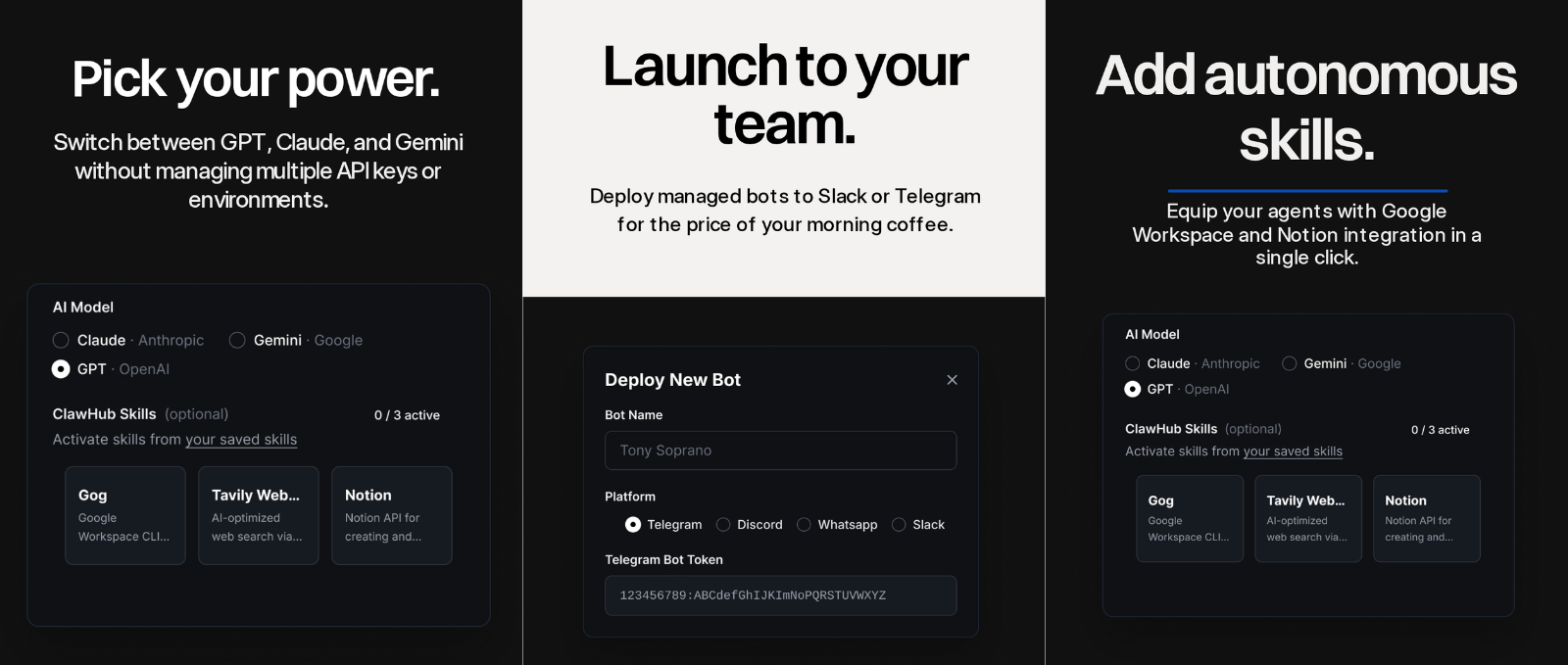

⚡ StartClaw turns OpenClaw into a ready-to-use AI employee that researches, scrapes websites, sends follow-ups, and updates tools automatically. No setup, no API keys. Try it here.

📱 Claude Mobile: Work Tools lets you explore Figma designs, create Canva slides, and check Amplitude dashboards, all from your phone.

🎞️ Mokkit turns any screenshot into a scroll-stopping animated visual. Perfect for showcasing apps and websites in a professional & engaging way.

BIG AI DRAMA

Two big stories in Silicon Valley just collided:

LiteLLM was hit by malware hidden inside one of its software dependencies. LiteLLM currently has 40K+ GitHub stars and thousands of forks.

Attention is turning to Delve, the startup that helped LiteLLM obtain its security certifications.

The malware was discovered by researcher Callum McMahon after his computer suddenly shut down during installation. Some researchers, including Andrej Karpathy, believe the malware may have been quickly generated using AI (“vibe coded”).

LiteLLM developers responded quickly and are now working with security firm Mandiant to fully investigate the issue. Once inside a system, the malware tried to:

Steal login credentials

Access connected developer accounts

Spread into other open-source packages

LiteLLM displays security certifications (SOC2, ISO 27001) issued via AI compliance startup Delve, which has faced criticism over how reliable its certification process is. The company denies these claims.

We read your emails, comments, and poll replies daily

How would you rate today’s newsletter? Your feedback helps us create the best newsletter possible.

Hit reply and say Hello – we'd love to hear from you!

Like what you're reading? Forward it to friends, and they can sign up here.

Cheers,

The AI Fire Team
