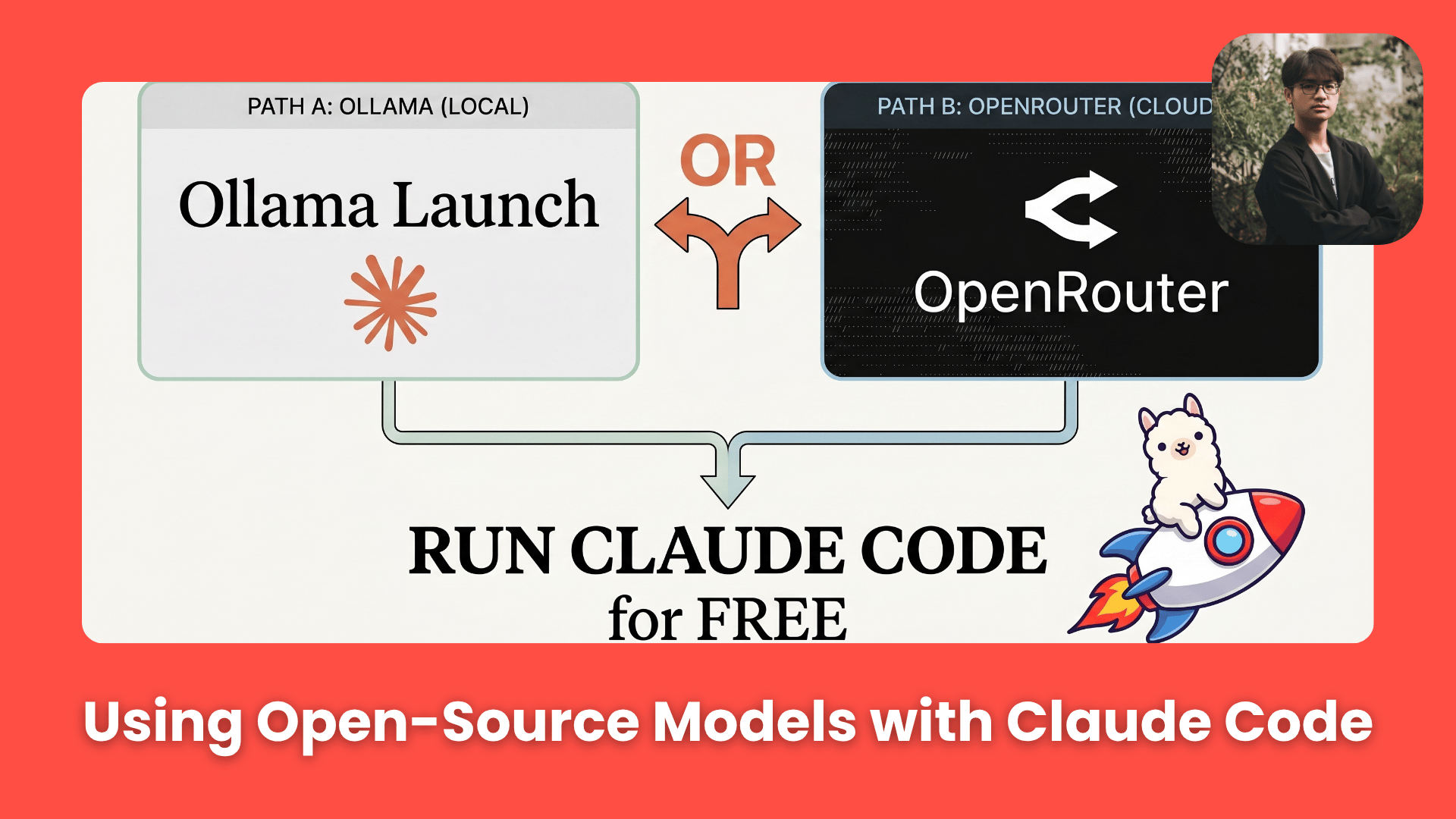

🤑 How to Use Claude Code 90% Cheaper Using Ollama + OpenRouter (Full Setup Guide)

Engineering no longer requires a massive API bill. This guide shows how to run Claude Code using local and free models without losing its agent workflow.

TL;DR

Claude Code is powerful but expensive if you run everything through Anthropic's paid models. There are two cheaper alternatives: running free, open-source models locally through Ollama, or routing Claude Code's requests through OpenRouter's free cloud tier. Both methods let you keep Claude Code's full interface and agentic behavior while cutting ongoing costs significantly, sometimes by 50x or more depending on usage. The catch: local models are slower, free cloud models have limits, and one incorrect config line can cause Claude Code to fall back to paid Anthropic models without an obvious warning.

Key Points

Fact: Claude Code's architecture separates the interface (the "car") from the model powering it (the "engine"), meaning you can legally swap in open-source models without breaking Anthropic's terms.

Mistake: Only overriding the main model field in OpenRouter's config. If you forget to override the Haiku and small-fast-model fields too, Claude Code falls back to paid Anthropic calls for tool use and quietly charges you.

Action: Start with OpenRouter Method B (Section V) if you want the fastest setup with no hardware requirements. Come back to the Ollama method once you want full local privacy.
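The "Mistake" above can be guarded against with a small pre-flight check. The sketch below is illustrative only: the environment variable names are assumptions based on commonly documented Claude Code settings, not taken from this guide's own config, and the helper `missing_overrides` is hypothetical. Verify the names against your installed Claude Code version before relying on it.

```python
import os

# ASSUMPTION: these variable names reflect commonly documented Claude Code
# settings. They are not confirmed by this guide; check your version's docs.
REQUIRED_OVERRIDES = (
    "ANTHROPIC_BASE_URL",             # where requests are sent
    "ANTHROPIC_MODEL",                # main model
    "ANTHROPIC_DEFAULT_HAIKU_MODEL",  # Haiku-tier model
    "ANTHROPIC_SMALL_FAST_MODEL",     # small, fast background model
)


def missing_overrides(env):
    """Return the override names that are unset or blank in `env`."""
    return [
        name
        for name in REQUIRED_OVERRIDES
        if not (env.get(name) or "").strip()
    ]


if __name__ == "__main__":
    # Check the current shell environment before launching Claude Code.
    missing = missing_overrides(os.environ)
    if missing:
        print("Not overridden (may fall back to paid models):")
        for name in missing:
            print("  " + name)
    else:
        print("All model overrides are set.")
```

Running this in the same shell you use to launch Claude Code lists any override that is still unset. It only checks that a value is present; it cannot confirm that the value points to a free model.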

I. Introduction

Claude Code is one of the best AI coding tools available right now. But using Anthropic's frontier models for every single task, including the boring ones, is like hiring a Michelin-star chef to butter your morning toast. The results are good but the cost adds up quickly.

The good news is that Claude Code's design makes the fix straightforward. The interface, tools, planning flow and agent behavior are all handled by Claude Code itself, so the model behind them can be swapped for a cheaper one without changing how you work.

This guide walks you through two practical methods for swapping the model behind Claude Code:

- Running local open-source models through Ollama

- Routing Claude Code's requests through OpenRouter's free cloud tier

By the end, you will understand exactly how each works, when to use each one and where the traps are hiding. This guide is useful if:

- You want to reduce Claude Code costs without losing the workflow.

- You want to control token spending more predictably.

- You want a flexible stack mixing premium and free models.

- You want setup steps with minimal configuration mistakes.

Bonus: config examples + workflow checklist.
