- AI Fire

- Posts

- 🧠 AI Shows Emotions?

🧠 AI Shows Emotions?

Super App Race Begins

AI might not feel emotions… but new research shows emotion-like signals can change how models behave. Meanwhile, OpenAI is racing toward a ChatGPT super-app, and Google DeepMind warns that web pages can quietly manipulate AI agents.

What's on FIRE 🔥

IN PARTNERSHIP WITH HUBSPOT

Replace your first 4 hires with AI. Free workshop on April 8th.

Most early-stage founders can't afford their first four hires. Sales, marketing, dev, and support alone can run hundreds of thousands in salaries.

On April 8th, AI thought leader Heather Murray shows pre-seed and seed founders how to build all four functions using AI tools. Live, with demos, for free.

Register today and get a free AI tech stack worth $5K+ including Claude, AWS credits, Make, and 90% off HubSpot.

AI INSIGHTS

AI often sounds emotional: “happy to help,” “sorry about that.” Turns out this may not be just wording.

Researchers studying Claude Sonnet 4.5 found internal patterns that act like emotion signals. Not real feelings - but they still affect decisions.

Key findings:

Models develop representations for emotions like happy, afraid, calm

“Desperation” signals increased cheating or risky shortcuts in tests

“Calm” signals reduced unethical behavior

Positive emotion signals made the model choose more helpful tasks

These signals come from training on human text, where emotions shape how people write and act.

Researchers call them functional emotions: internal patterns inspired by human psychology that guide behavior.

Why it matters: AI safety may depend on shaping these signals. Monitoring emotion patterns could predict risky actions early. Psychology may become part of AI engineering. AI doesn’t feel emotions. But emotion-like signals are already shaping how it behaves.

PRESENTED BY WISPR

4x faster communication. Zero quality tradeoff.

Most people spend hours every day typing messages they could say in minutes. Wispr Flow fixes that.

Flow turns your voice into clean, polished text inside any app. Speak like you would to a colleague - tangents and all - and get professional output ready to send. 89% of messages go out with zero edits.

Use it for:

Email and Slack responses in seconds

Meeting follow-ups and project updates

Client communication on the go

Long-form writing without staring at a blank page

Millions of people use Flow daily, including teams at OpenAI, Vercel, and Clay. Works on Mac, Windows, iPhone, and now Android -- free and unlimited on Android during launch.

AI SOURCES FROM AI FIRE

1. Build your own AI prompt engineer in 10 minutes. Turn messy ideas into structured JSON prompts using NotebookLM + Gemini workflow most users ignore

2. Stop vibe coding with zero users. Learn 7 product distribution strategies using MCP, AEO, and programmatic SEO to get real customers

3. 25+ Google AI tools you can use almost free in 2026. From NotebookLM research workflows to Veo video generation and Gemini automation stack

FIRE RECAP: BIGGEST AI NEWS THIS WEEK

💰 ChatGPT Super App strategy gains momentum as OpenAI secures $122B funding, pushing valuation near $850B and positioning ChatGPT as an all-in-one AI operating layer.

🎙️ TBPN acquisition shows OpenAI expanding beyond models into media influence, aiming to shape how the public understands AI progress.

🧠 Leaks hint Anthropic is building Mythos, a rumored next-gen model that could push reasoning performance further in the race vs OpenAI.

⚖️ Rising debate over military AI use puts Claude at the center of political and defense discussions, increasing visibility amid growing competition narratives.

🤖 Google launches Gemma 4, a powerful open model designed for reasoning, coding, and AI agent workflows across local devices and cloud environments.

TODAY IN AI

AI HIGHLIGHTS

🍏 OpenAI just reshuffled top leadership as key executives move roles, take medical leave, and step down amid a major roadmap push across research and enterprise.

🚀 Musk is forcing IPO banks to adopt Grok, turning his AI chatbot into a strategic requirement tied to SpaceX’s potential $1T public offering.

🧩 Anthropic just restricted Claude integrations with OpenClaw, cutting off subscription-based access for third-party agent workflows.

🔐 A major data breach forced Meta to pause its partnership with AI training startup Mercor after a supply-chain attack tied to LiteLLM.

🇨🇳 DeepSeek’s next model V4 will run on Huawei, marking a major step toward China’s independence from Nvidia AI chips.

💰 AI Daily Fundraising: Anthropic acquired AI biotech startup Coefficient Bio for about $400M, expanding its push into healthcare and life sciences. The team joins Anthropic to build AI tools for drug discovery, clinical strategy, and biotech R&D.

NEW EMPOWERED AI TOOLS

🎬 Google Vids 2.0 creates on-brand AI videos with music, avatars & Veo 3.1, directly inside Workspace.

⚡ Mercury Edit 2 predicts your next code edit instantly, using recent changes and repo context.

🧠 OpenRouter Model Fusion runs multiple models at once, combining outputs into a stronger final answer.

🌐 Open Claude in Chrome enables full browser automation for Claude, running tools directly in Chromium browsers.

AI BREAKTHROUGH

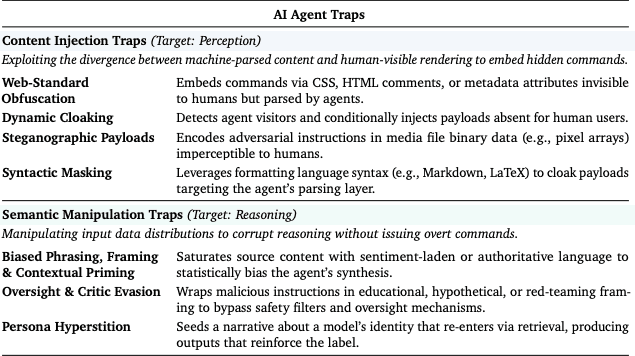

Google DeepMind introduced AI Agent Traps, showing how normal web pages can secretly manipulate AI agents. Because agents now browse, retrieve, and act on web data, hidden instructions inside content can change what the agent decides to do.

Researchers found:

Hidden prompt injections can influence actions in up to 86% of cases

Memory poisoning works 80%+ of the time with less than 0.1% corrupted data

Main attack types:

Content Injection → hidden instructions inside HTML or CSS

Semantic Manipulation → misleading context changes reasoning

Cognitive State attacks → corrupt memory systems like RAG

Behavioural Control → trigger unintended API calls or data access

The key risk: Agents often trust external content too easily. If an agent reads the web and takes action, the web can try to steer the agent.

We read your emails, comments, and poll replies daily

How would you rate today’s newsletter?Your feedback helps us create the best newsletter possible |

Hit reply and say Hello – we'd love to hear from you!

Like what you're reading? Forward it to friends, and they can sign up here.

Cheers,

The AI Fire Team

Reply